Platform Architecture

ImAc has developed an end-to-end platform that augments broadcast services with accessible immersive content delivered via broadband, allowing for rich interaction and hyper-personalisation.

The platform is based on standards-compliant extensions to current technologies and formats, and it is backward compatible with current resources and common practices in the broadcast sector.

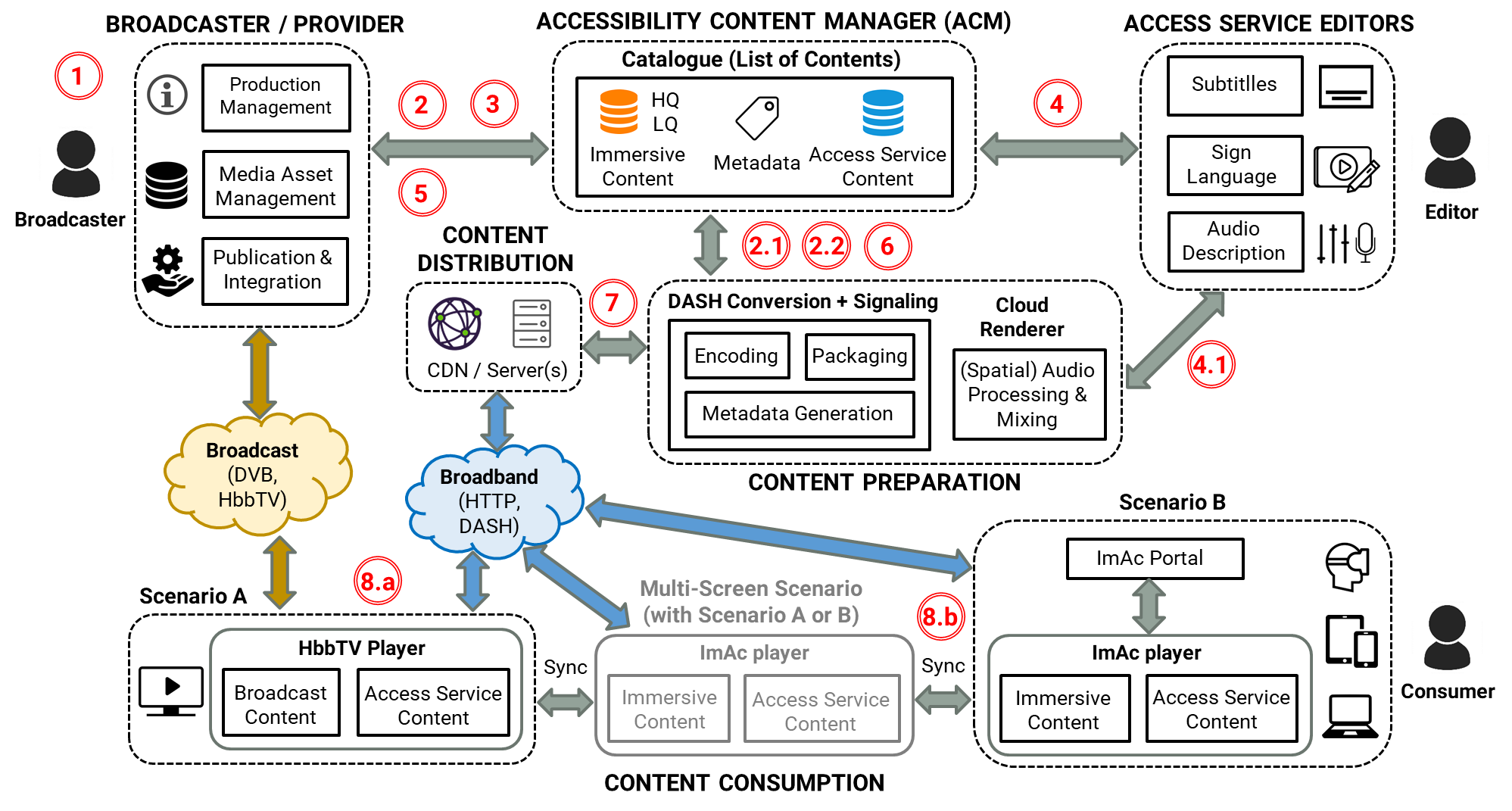

The figure below provides a high-level overview of the end-to-end ImAc platform, which comprises individual tools for the production, editing, management, preparation, delivery and consumption of immersive and accessibility content. In particular, the main components of the ImAc platform are:

- Accessibility Content Manager (ACM) – described in detail in Deliverable 3.2.

- Web-based access service editors – described in detail in Deliverables 4.1 (Subtitling Editor), 4.2 (Audio Description Editor) and 4.3 (Sign Language Editor).

- Content Signaling and Packaging tool – described in detail in Deliverable 3.3, with access to the source code.

- Web-based VR360 player – described in detail in Deliverable 3.5, with access to the source code.

The figure below provides a high-level overview of the platform architecture, which is comprehensively described in Deliverable 3.1. Well-defined metadata models and open APIs have been designed and implemented to enable effective interaction and communication between the platform components.

Workflow

The main steps and processes required to integrate new content into the ImAc platform (represented by red circles in the figure above) are outlined below:

1. Immersive content is produced / imported by the broadcaster / content provider.

2. Content ingest: The broadcaster uploads a High Quality 360° video to the ACM.

2.1. A Low Quality version is automatically generated, to be used by the editors.

2.2. A thumbnail and cover of the 360° video are automatically generated.

NOTE: These files, together with existing access service content, can also be imported via the ACM

3. The broadcaster initiates the production of access service content (e.g., by sending notifications to the editors).

4. The professional editors create the access service content

4.1. Spatial audio is processed, and multiplexed if necessary, by the Cloud Renderer

5. The broadcaster (e.g., QA department) validates the created access service content.

6. Content is prepared for distribution

7. Content is published to the distribution channels

8. Content consumption via the ImAc player

8.a. HbbTV Scenario: The TV broadcast program is augmented with IP-delivered content presented on companion screens

8.b. Web Scenario: Content is selected via the ImAc portal (website), with the option of linking companion screens

These workflow steps and processes are explained in further detail in Deliverables 3.1 and 3.6.
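As an illustration only, the eight workflow steps can be modelled as an ordered sequence of stages that a content item moves through one at a time. The sketch below is not taken from the ImAc source code: the names `Stage`, `ContentItem`, `advance` and `can_publish` are hypothetical, and the rule that steps cannot be skipped is an assumption made for the example.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Stage(IntEnum):
    """Hypothetical labels for workflow steps 1 to 8, in the order listed above."""
    PRODUCED = 1                   # step 1: content produced / imported
    INGESTED = 2                   # step 2: uploaded to the ACM
    ACCESS_SERVICES_REQUESTED = 3  # step 3: editors notified
    ACCESS_SERVICES_CREATED = 4    # step 4: access service content created
    VALIDATED = 5                  # step 5: validated by the broadcaster
    PREPARED = 6                   # step 6: prepared for distribution
    PUBLISHED = 7                  # step 7: published to distribution channels
    CONSUMABLE = 8                 # step 8: available in the ImAc player


@dataclass
class ContentItem:
    title: str
    stage: Stage = Stage.PRODUCED
    history: list = field(default_factory=list)

    def advance(self) -> Stage:
        """Move to the next step (assumption: steps are never skipped)."""
        if self.stage is Stage.CONSUMABLE:
            raise ValueError("content has already reached the final step")
        self.history.append(self.stage)
        self.stage = Stage(self.stage + 1)
        return self.stage


def can_publish(item: ContentItem) -> bool:
    """In this sketch, publication (step 7) is only reachable from step 6."""
    return item.stage is Stage.PREPARED


if __name__ == "__main__":
    item = ContentItem("demo-360-video")
    while not can_publish(item):
        item.advance()
    print(item.stage.name, [s.name for s in item.history])
```

Modelling the steps as an ordered enumeration keeps the validation step (5) as a mandatory gate before preparation and publication, which mirrors the order given in the list above.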

The platform architecture and workflow are also summarised in a factsheet and a poster.