When developing accessibility tools, it is essential that the applications you build remain fully compatible with the technologies consumers already use. A key requirement identified within the ImAc project was voice control. We therefore built a prototype to demonstrate how existing voice control hardware can be used to control the ImAc player.

The prototype uses a 3rd Generation Amazon Echo.

Overview

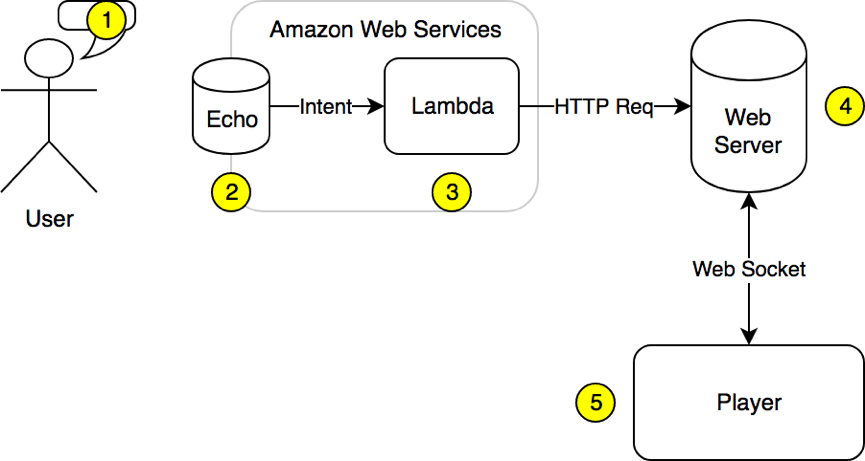

The basic flow of the prototype interface is as follows:

- The User

The user issues commands to Alexa. An application built for Alexa is referred to as a ‘skill’, and you begin interacting with a skill by issuing the command ‘[Wake Word] open [skill invocation name]’. In our prototype the wake word was set to ‘Echo’ and the skill invocation name to ‘ImAc’, so in this demo the user starts the interaction by saying ‘Echo open ImAc’.

- The Echo

Alexa skills are built within the Amazon Web Services (AWS) framework. Each skill contains a number of ‘intents’, where each intent is designed to trigger a specific event. Several different phrases may map to the same intent and therefore trigger the same action. Variables such as numbers can also be defined within an intent.
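To make the idea of several phrases mapping to one intent concrete, here is a minimal sketch. Alexa performs this matching itself using its own speech models, so the toy matcher below only illustrates the concept. The intent names, sample phrases, and the helpers `sampleToRegExp` and `resolveIntent` are all hypothetical and not taken from the ImAc skill.

```javascript
// Hypothetical intents, loosely following the shape of an Alexa interaction model.
// The names and phrases are illustrative and are not taken from the ImAc skill.
const intents = [
  { name: 'PlayIntent', slots: [], samples: ['play', 'start the video', 'resume playback'] },
  { name: 'PauseIntent', slots: [], samples: ['pause', 'pause the video'] },
  {
    name: 'SkipForwardIntent',
    // {seconds} is a variable (a "slot") that the speaker fills with a number.
    slots: [{ name: 'seconds', type: 'AMAZON.NUMBER' }],
    samples: ['skip forward {seconds} seconds', 'jump ahead {seconds} seconds'],
  },
];

// Turn a sample phrase into a regular expression with one named group per slot.
function sampleToRegExp(sample) {
  const escaped = sample.replace(/[.*+?^$()|[\]\\]/g, '\\$&');
  const pattern = escaped.replace(/\{(\w+)\}/g, '(?<$1>\\d+)');
  return new RegExp(`^${pattern}$`, 'i');
}

// Return the first intent whose sample phrase matches, plus any numeric slot values.
function resolveIntent(utterance) {
  for (const intent of intents) {
    for (const sample of intent.samples) {
      const match = sampleToRegExp(sample).exec(utterance.trim());
      if (match) {
        const slots = {};
        for (const [key, value] of Object.entries(match.groups || {})) {
          slots[key] = Number(value);
        }
        return { name: intent.name, slots };
      }
    }
  }
  return null; // no intent recognised
}
```

With these definitions, both ‘play’ and ‘start the video’ resolve to the same `PlayIntent`, while ‘jump ahead 30 seconds’ resolves to `SkipForwardIntent` with `seconds` set to 30.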

- AWS Lambda

The name of the identified intent is posted to Lambda, an AWS service that lets you run JavaScript code within AWS in a serverless manner. In essence, the Lambda function bridges each intent from the Echo to our ImAc webserver by sending an HTTP POST request containing the current intent. It also formulates the spoken response that is returned to the user.
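The bridging step could look roughly like the sketch below. It is not the prototype's actual code: the endpoint URL, the JSON body layout, the spoken phrases, and the helper names (`speak`, `postIntent`, `createHandler`) are assumptions made for illustration. It also assumes a Node.js 18 or later runtime, where `fetch` is available globally. The `request.type`, `request.intent.name`, and `outputSpeech` fields follow the standard Alexa request and response JSON.

```javascript
// Hypothetical endpoint; the real ImAc webserver URL is not given in this document.
const WEB_SERVER_URL = 'https://example.com/imac/command';

// Build a minimal Alexa response that speaks `text` and keeps the session open.
function speak(text) {
  return {
    version: '1.0',
    response: {
      outputSpeech: { type: 'PlainText', text },
      shouldEndSession: false,
    },
  };
}

// Forward the intent to the webserver with an HTTP POST (assumed JSON body layout).
async function postIntent(intentName, slots) {
  const res = await fetch(WEB_SERVER_URL, {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify({ intent: intentName, slots }),
  });
  return res.ok;
}

// Factory so the POST function can be swapped out, for example in tests.
function createHandler(post = postIntent) {
  return async function handler(event) {
    const request = event.request;
    if (request.type === 'LaunchRequest') {
      return speak('Welcome to ImAc. What would you like to do?');
    }
    if (request.type === 'IntentRequest') {
      try {
        const ok = await post(request.intent.name, request.intent.slots || {});
        return speak(ok ? 'Okay.' : 'Sorry, the player did not respond.');
      } catch (err) {
        return speak('Sorry, I could not reach the player.');
      }
    }
    return speak('Sorry, I did not understand that.');
  };
}

// In a Lambda deployment, the function returned by createHandler() would be
// exported as the handler.
```

Keeping the HTTP call behind a parameter is a design choice for this sketch only; it lets the handler be exercised without a network connection.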

- Web Server

When the player starts, it opens a WebSocket connection to our webserver. The webserver can then use this WebSocket to forward the command to the player.
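The relay logic on the webserver can be sketched independently of any particular WebSocket library. In the sketch below, each connected player is assumed to be represented by an object with a `send(string)` method, as WebSocket objects provide. The class name `PlayerRelay`, the intent-to-command mapping, and the message format are hypothetical.

```javascript
// Assumed mapping from intent names to player commands (hypothetical names).
const COMMANDS = new Map([
  ['PlayIntent', 'play'],
  ['PauseIntent', 'pause'],
]);

class PlayerRelay {
  constructor() {
    this.players = new Set();
  }

  // Call when a player opens its WebSocket; `socket` must expose send(string).
  add(socket) {
    this.players.add(socket);
  }

  // Call when a player's WebSocket closes.
  remove(socket) {
    this.players.delete(socket);
  }

  // Call with the body of each HTTP POST received from Lambda.
  // Returns the number of players the command was sent to.
  forward(body) {
    const { intent } = JSON.parse(body);
    const command = COMMANDS.get(intent);
    if (!command) return 0; // unknown intent: nothing to forward
    const message = JSON.stringify({ command });
    for (const socket of this.players) {
      socket.send(message);
    }
    return this.players.size;
  }
}
```

The server would call `add` and `remove` from its WebSocket connection and close events, and `forward` from its HTTP POST handler.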

- Player

The player receives the command (‘play’, ‘pause’, etc.) and responds accordingly.
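On the player side, command handling might be sketched as follows. The JSON message format (`{"command": "play"}`) and the function name `handleCommand` are assumptions. The `player` argument is assumed to expose `play()` and `pause()`, as an HTML `<video>` element does.

```javascript
// Apply one incoming message to the player.
// Returns true if a known command was executed, false otherwise.
function handleCommand(message, player) {
  let command;
  try {
    ({ command } = JSON.parse(message));
  } catch (err) {
    return false; // ignore malformed messages
  }
  switch (command) {
    case 'play':
      player.play();
      return true;
    case 'pause':
      player.pause();
      return true;
    default:
      return false; // unknown command: leave the player untouched
  }
}

// In a browser, this could be wired to the WebSocket roughly like so:
//   socket.onmessage = (event) => handleCommand(event.data, videoElement);
```

Unknown or malformed messages are ignored rather than raising an error, so a bad command cannot interrupt playback.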